This week, we learned something disturbing: OpenAI is now being forced to retain all ChatGPT logs, even the ones users deliberately delete.

That includes:

- Manually deleted conversations

- “Temporary Chat” sessions that were never supposed to persist

- Confidential business data passed through OpenAI’s API

The reason? A court order.

The New York Times and other media companies are suing OpenAI over alleged copyright infringement. As part of the lawsuit, they speculated that people might be using ChatGPT to bypass paywalls, and deleting their chats to cover their tracks. Based on that speculation alone, a judge issued a sweeping preservation order forcing OpenAI to retain every output log going forward.

Even OpenAI doesn’t know how long they’ll be required to keep this data.

This is bigger than just one court case

Let’s be clear: ChatGPT is not a privacy tool. OpenAI collects a vast amount of user data, and everything you type is tied to your real-world identity. (They don’t even allow VoIP numbers at signup, only real mobile numbers.) ChatGPT is a fantastic tool for productivity, coding, research, and brainstorming. But it is not a place to store your secrets.

That said, credit where it’s due: OpenAI is pushing back. They’ve challenged the court order, arguing it undermines user privacy, violates global norms, and forces them to retain sensitive data users explicitly asked to delete.

And they’re right to fight it.

If a company promises, "We won’t keep this", and users act on that promise, they should be able to trust it. When that promise is quietly overridden by a legal mandate—and users only find out months later—it destroys the trust we rely on to function in a digital society.

Why this should scare you

This isn’t about sneaky opt-ins or buried fine print. It’s about people making deliberate choices to delete sensitive data—and those deletions being ignored.

That’s the real problem: the nullification of your right to delete.

Private thoughts. Business strategy. Health questions. Intimate disclosures. These are now being held under legal lock, despite clear user intent for them to be erased.

When a platform offers a “Delete” button or advertises "Temporary Chat", the public expectation is clear: that information will not persist.

But in a system built for compliance, not consent, those expectations don’t matter.

I wish this weren’t the case

I want to live in a world where:

- You can go to the doctor and trust that your medical records won’t be subpoenaed

- You can talk to a lawyer without fearing your conversations could become public

- Companies that want to protect your privacy aren’t forced to become surveillance warehouses

But we don’t live in that world.

We live in a world where:

- Prosecutors can compel companies to hand over privileged legal communications (just ask Roger Ver’s lawyers)

- Government entities can override privacy policies, without user consent or notification

- “Delete” no longer means delete

This isn’t privacy. It’s panopticon compliance.

So what can you do?

You can’t change the court order.

But you can stop feeding the machine.

Here’s how to protect yourself:

1. Be careful what you share

When you’re logged into centralized tools like ChatGPT, Claude, or Perplexity, your activity is stored and linked to a single identity across sessions. That makes your full history a treasure trove for anyone who gains access to it, whether through a breach, a subpoena, or a court order.

You can still use these tools for light, non-sensitive tasks, but be careful not to share:

- Sensitive information

- Legal or business strategies

- Financial details

- Anything that could harm you if leaked

These tools are great for brainstorming and productivity, but not for contracts, confessions, or client files.
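
If you do paste text into a cloud AI tool, one habit that helps is scrubbing obvious identifiers first. Here is a minimal sketch of that idea in Python. The patterns and the `redact` helper are my own illustrative assumptions, not a complete PII filter: they only catch email addresses, card-like numbers, and phone-like numbers, and they will miss names, addresses, and anything context-dependent.

```python
import re

# Hypothetical, deliberately simple patterns for illustration only.
# Order matters: card numbers are checked before phone numbers, because
# the looser phone pattern would otherwise swallow them.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),   # 13 to 16 digits
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),     # loose; may over-match
}


def redact(text: str) -> str:
    """Replace each match with a placeholder like [EMAIL] before sharing."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

You would call `redact(draft)` on your text before pasting it anywhere. The phone pattern is intentionally loose, so it may also blank out other long digit runs such as dates; when privacy is the goal, over-redacting is the safer failure. Treat this as a seatbelt, not a guarantee: the best protection is still not sharing the sensitive material at all.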

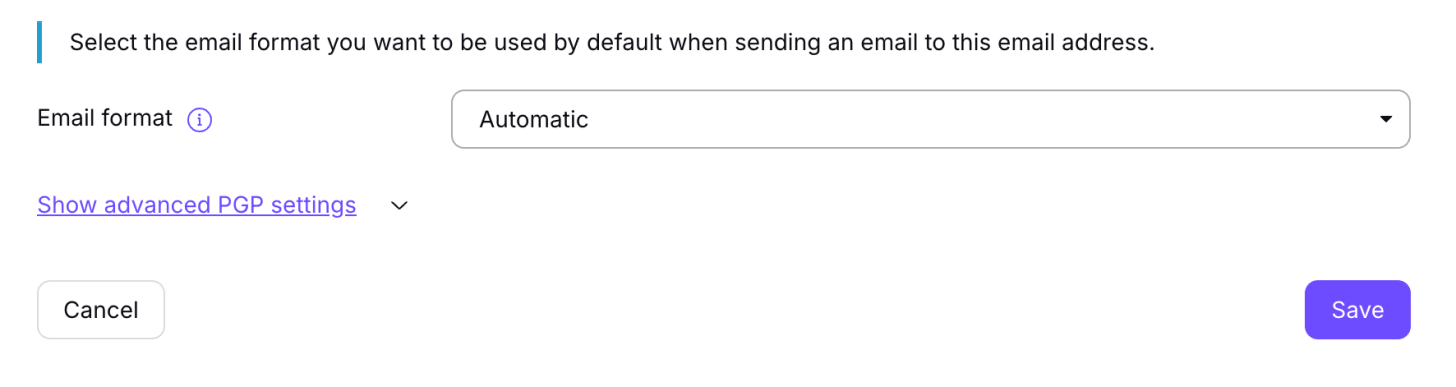

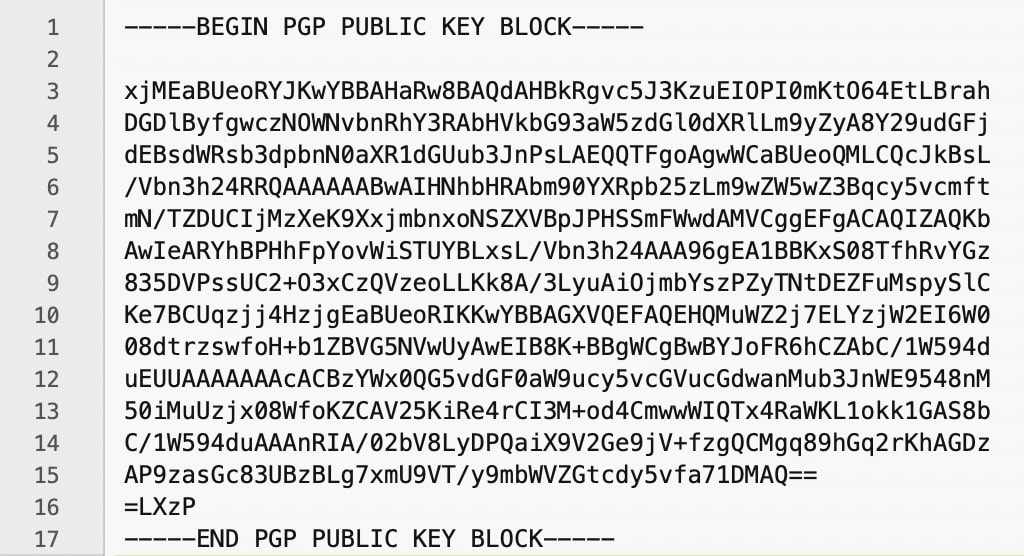

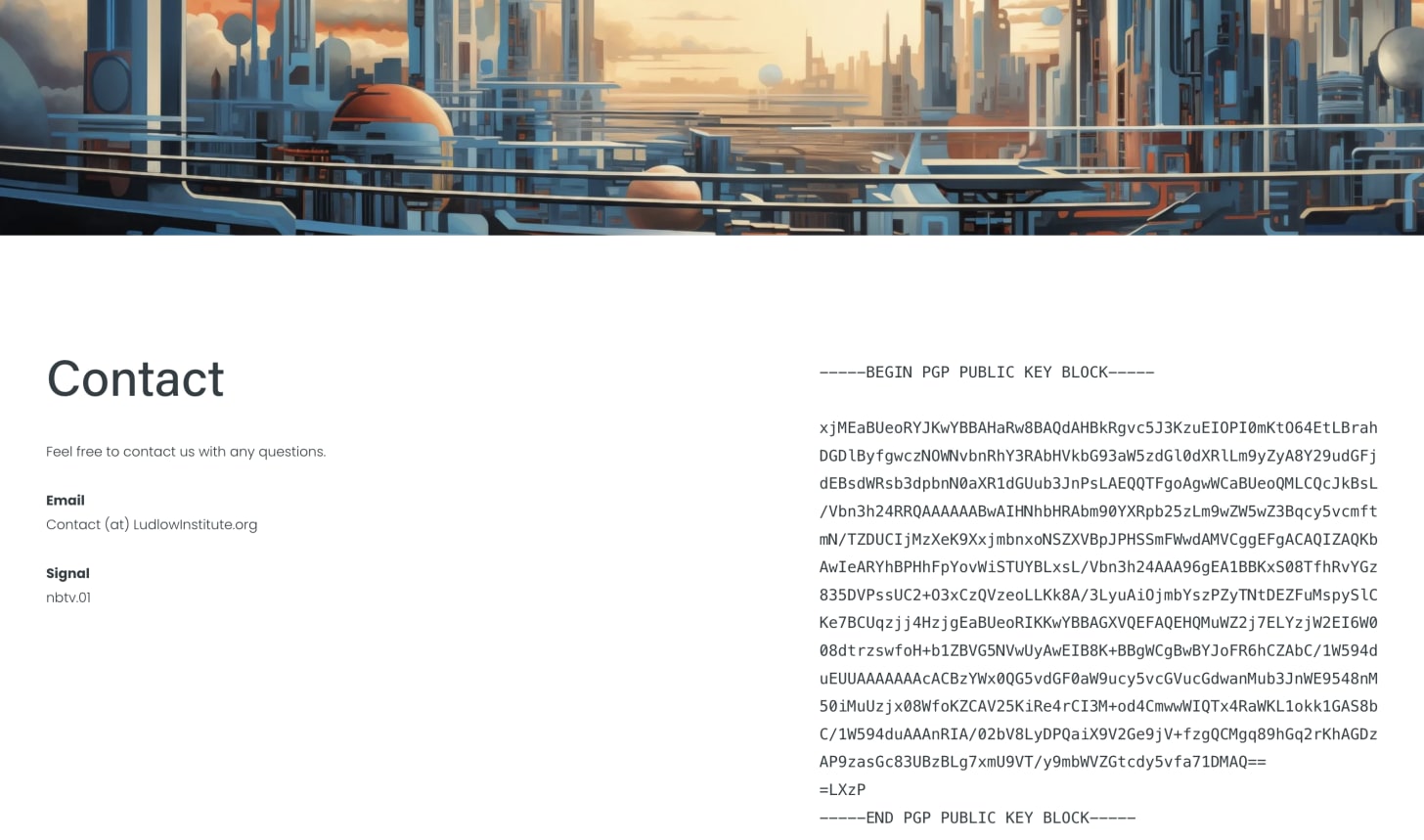

2. Use privacy-respecting platforms (with caution)

If you want to use AI tools with stronger privacy protections, here are two promising options:

(There are many more. Let us know in the comments about your favorites.)

Brave’s Leo

- Uses reverse proxies to strip IP addresses

- Promises zero logging of queries

- Supports local model integration so your data never leaves your device

- Still requires trust in Brave’s infrastructure
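
To make the reverse-proxy idea concrete, here is a rough sketch of the general technique, not Brave’s actual code. A proxy sits between you and the model: it opens its own connection to the backend (so the backend sees the proxy’s address, not yours) and drops any headers that would otherwise pass your IP along. The header list and the `forwardable_headers` helper are assumptions for illustration.

```python
# Headers that proxies commonly use to pass along the original client's IP.
# This list is an illustrative assumption, not an exhaustive inventory.
IP_CARRYING_HEADERS = {"x-forwarded-for", "x-real-ip", "forwarded"}


def forwardable_headers(headers: dict[str, str]) -> dict[str, str]:
    """Return a copy of the request headers without IP-revealing entries."""
    return {
        name: value
        for name, value in headers.items()
        if name.lower() not in IP_CARRYING_HEADERS
    }
```

This is also why the last bullet matters: the proxy itself still sees your real address, so the protection is only as good as the operator’s promise not to record it.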

Venice.ai

- No account required

- Strips IP addresses and doesn’t link sessions together

- Uses a decentralized GPU marketplace to process your queries

- Important caveat: Venice is just a frontend—the compute providers running your prompts can see what you input. Venice can’t enforce logging policies on backend providers.

- Because it's decentralized, at least no single provider can build a profile of you across sessions

In short: I trust Brave with more data, because privacy is central to their mission. I also trust Venice’s promise not to log data, but I’m hesitant to trust faceless GPU providers to follow the same no-logging policy. On the plus side, Venice’s decentralized model means no single provider processing your queries can see the full picture, which is a powerful safeguard in itself. Both options are good for different purposes.

3. Run AI locally for maximum privacy

This is the gold standard.

When you run an AI model locally, your data never leaves your machine. No cloud. No logs.

Tools like Ollama, paired with OpenWebUI, let you easily run powerful open-source models on your own device.
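
For the more technical readers, here is a minimal sketch of what talking to a local model looks like from Python, using only the standard library. It assumes Ollama is already installed and running on its default local address, and that you have pulled a model; the model name `llama3` is just a placeholder for whichever one you use. The point to notice is the URL: the request goes to `localhost`, so the prompt never leaves your machine.

```python
import json
import urllib.request

# Ollama's default local endpoint; adjust if you changed the port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a POST request for Ollama's generate endpoint (non-streaming)."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local Ollama server and return its reply text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `ask_local_model("Explain this contract clause in plain English.")` returns the model’s answer as a string. If nothing is listening on that port, the call fails with a connection error rather than silently falling back to a cloud service.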

We published a complete guide for getting started—even if you’re not technical.

The real battle: Your right to privacy

This isn’t just about one lawsuit or one company.

It’s about whether privacy means anything in the digital age.

AI tools are rapidly becoming our therapists, doctors, legal advisors, and confidants. They know what we eat, what we’re worried about, what we dream of, and what we fear. That kind of relationship demands confidentiality.

And yet, here we are, watching that expectation collapse under the weight of compliance.

If courts can force companies to preserve deleted chats indefinitely, then deletion becomes a lie. Consent becomes meaningless. And companies become surveillance hubs for whoever yells loudest in court.

The Fourth Amendment was supposed to stop this. It protects against unreasonable searches and seizures, and generally requires a warrant before the government can seize private data. But courts are now sidestepping that by ordering companies to keep everything in advance, just in case.

We should be fighting to reclaim that right. Not normalizing its erosion.

Final Thoughts

We are in a moment of profound transition.

AI is rapidly becoming integrated into our daily lives—not just as a search tool, but as a confidant, advisor, and assistant. That makes the stakes for privacy higher than ever.

If we want a future where privacy survives, we can’t just rely on the courts to protect us. We have to be deliberate about how we engage with technology—and push for tools that respect us by design.

As Erik Voorhees put it: "The only way to respect user privacy is to not keep their data in the first place".

The good news? That kind of privacy is still possible.

You have options. You can use AI on your terms.

Just remember:

Privacy isn’t about hiding. It’s about control.

About choosing what you share—and with whom.

And right now, the smartest choice might be to share a whole lot less.

Yours in privacy,

Naomi