Swedish streaming giant Spotify promotes anonymous pseudo-musicians and computer-generated music to avoid paying royalties to real artists, according to a new book by music journalist Liz Pelly.

Meanwhile, criticism is mounting against Spotify CEO Daniel Ek, who recently invested over €600 million in a company developing AI technology for future warfare.

In the book Mood Machine: The Rise of Spotify and the Costs of the Perfect Playlist, Liz Pelly reveals that Spotify has long been running a secret internal program called Perfect Fit Content (PFC). The program creates cheap, generic background music – often called "muzak" – through a network of production companies with ties to Spotify. This music is then placed in Spotify's popular playlists, often without crediting any real artists.

The program was tested as early as 2010 and is described by Pelly as Spotify's most profitable strategy since 2017.

"But it also raises worrying questions for all of us who listen to music. It puts forth an image of a future in which – as streaming services push music further into the background, and normalize anonymous, low-cost playlist filler – the relationship between listener and artist might be severed completely", Pelly writes.

By 2023, the PFC program controlled hundreds of playlists. More than 150 of them – with names like Deep Focus, Cocktail Jazz, and Morning Stretch – consisted entirely of music produced within PFC.

"Only soulless AI music will remain"

A jazz musician told Pelly that Spotify asked him to create an ambient track for a one-time payment of a few hundred dollars, on terms that did not let him retain the rights to the music. When the track later received millions of plays, he realized he had likely been deceived.

Social media criticism has been harsh. One user writes: "In a few years, only soulless AI music will remain. It's an easy way to avoid paying royalties to anyone."

"I deleted Spotify and cancelled my subscription", comments another.

Spotify has previously faced criticism for similar practices. The Guardian reported in February that the company's Discovery Mode system allows artists to gain more visibility, but only if they agree to accept 30 percent lower payouts.

Not only has Spotify been covertly pushing AI artists to “reduce royalty payments” to real human artists — that extra profit is now being poured into an “AI military startup” that will profit off bombing babies.

You don’t hate these people enough.

— James Li (@5149jamesli) June 29, 2025

Spotify's CEO invests in AI for warfare

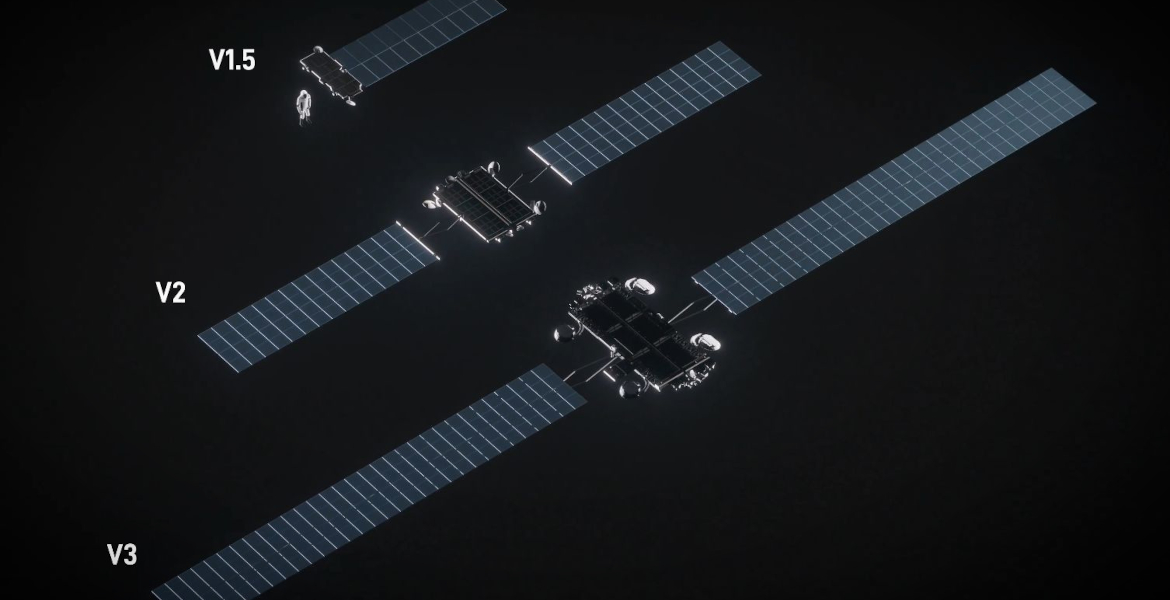

Meanwhile, CEO Daniel Ek has faced severe criticism for investing over €600 million through his investment firm Prima Materia in the German AI company Helsing. The company develops software for drones, fighter aircraft, submarines, and other military systems.

"The world is being tested in more ways than ever before. That has sped up the timeline. There’s an enormous realisation that it’s really now AI, mass and autonomy that is driving the new battlefield", Ek commented in an interview with the Financial Times.

With this investment, Ek has also become chairman of Helsing. The company is working on a project called Centaur, in which artificial intelligence will be used to control fighter aircraft.

The criticism was swift. Australian producer Bluescreen explained in an interview with music site Resident Advisor why he chose to leave Spotify – a decision several other music creators have also made.

"War is hell. There’s nothing ethical about it, no matter how you spin it. I also left because it became apparent very quickly that Spotify’s CEO, as all billionaires, only got rich off the exploitation of others", he said.

Competitor chooses different path

Spotify has previously been questioned over its proximity to political power. The company donated $150,000 to Donald Trump's inauguration fund in 2025 and hosted an exclusive brunch the day before the ceremony.

While Spotify is heavily investing in AI-generated music and voice-controlled DJs, competitor SoundCloud has chosen a different path.

"We do not develop AI tools or allow third parties to scrape or use SoundCloud content from our platform for AI training purposes", explains communications director Marni Greenberg.

"In fact, we implemented technical safeguards, including a 'no AI' tag on our site to explicitly prohibit unauthorised use."